Replacing managers with an AI World Model

Can AI completely replace managers?

Jack Dorsey and Roelof Botha published “From Hierarchy to Intelligence” on March 31, 2026. It is a serious piece of thinking. The historical framing is sharp, the diagnosis of why hierarchies exist is largely correct, and the argument that AI changes the information-routing constraint is real.

But the essay is about one-third of what managers actually do. It treats that one-third as the whole job, removes the people doing it, and calls the problem solved. The other two-thirds are still there. They just don’t have anyone doing them anymore.

To understand that better, we need to be precise about what managing actually is.

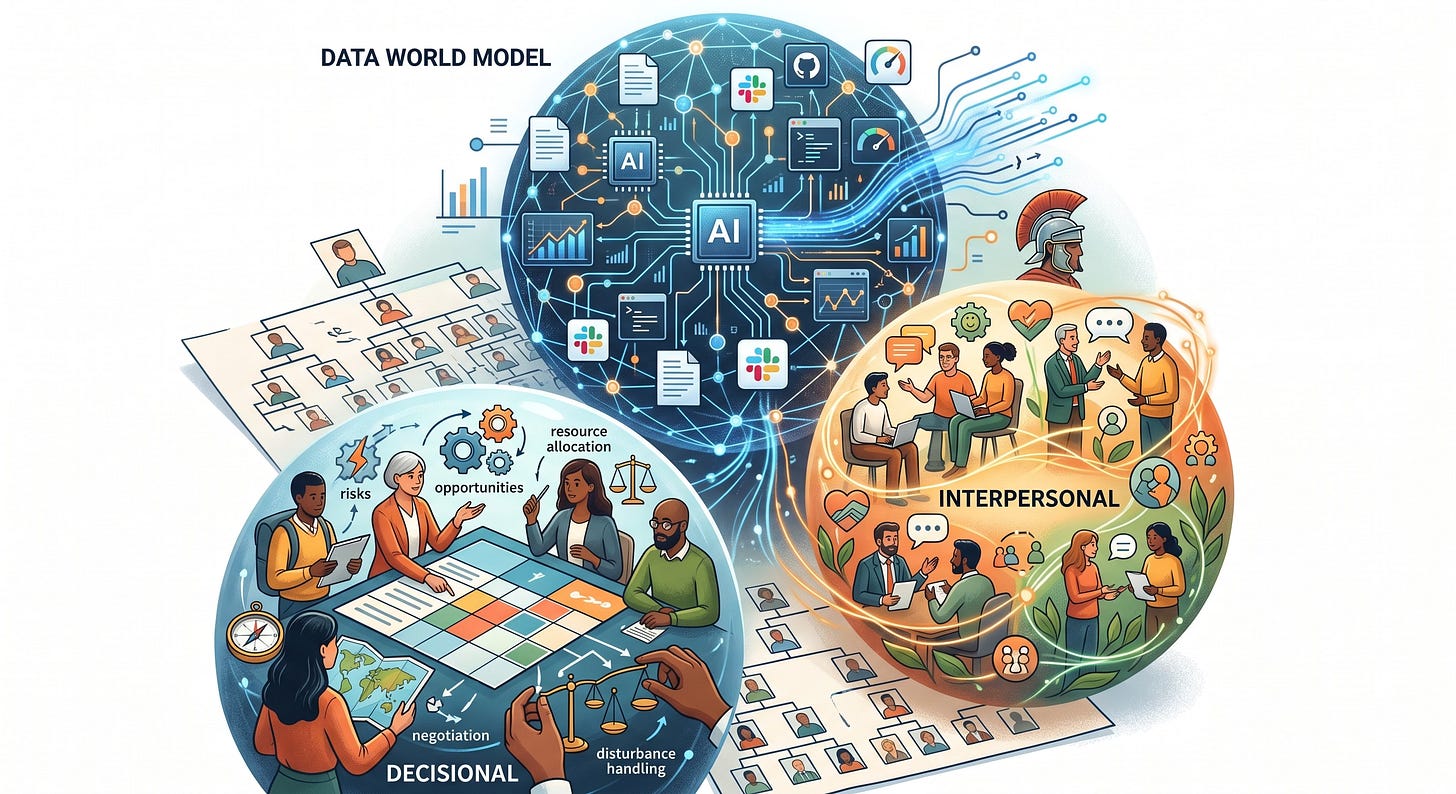

Three Clusters, Not One

In 1973, Henry Mintzberg published The Nature of Managerial Work, based on direct observation of what managers do with their time. His finding was that managerial work falls into three clusters: informational, interpersonal, and decisional.

The informational cluster is what Dorsey and Botha are talking about. Managers monitor what’s happening, disseminate that information across the organization, and represent the team to the outside world. This is the information routing function. It’s the layer that hierarchy was built to support, and it’s the layer that AI can now perform continuously, at scale.

That’s true. But it’s only one of three.

What AI Cannot Route

The interpersonal cluster covers things that depend on trust, relationship, and human accountability. The leader role is about motivating and developing people, figuring out who has potential and what they need to grow, and having the difficult conversations.

The liaison role involves building relationships across organizational boundaries, the kind of connective tissue that makes cross-functional work actually work. The figurehead role is about legitimacy and accountability. When something goes wrong, someone needs to be responsible in a way a system cannot be.

A telling data point from the Block restructuring itself: current and former employees told The Guardian that roughly 95% of AI-generated code changes still require human modification. This is in a remote-first, highly digital, machine-readable organization, exactly the environment that Dorsey describes as most amenable to this model. The humans are still in the loop not because the information system failed, but because the work itself requires judgment that isn’t in the data.

The decisional cluster is where this becomes even clearer. Mintzberg’s entrepreneur role involves sensing and acting on opportunities that aren’t visible yet. A world model, by definition, can only reflect what has already happened. It cannot tell you what to build that doesn’t exist yet. The disturbance handler role is about responding to crises and genuinely novel situations, exactly the circumstances where pattern-matching on historical data is most likely to fail. Resource allocation and negotiation involve competing interests, trust between parties, and judgment under uncertainty. In regulated industries such as financial services and healthcare, or in any domain with fiduciary obligations, you can’t delegate these decisions to a system regardless of how good it is.

The Risks Worth Naming

Beyond the functional gaps, there are a few things worth noting:

Post-hoc rationalization? Block cut 40% of its workforce in February 2026, before the essay was published. The stock jumped roughly 22%. Botha, who co-authored the essay, sits on Block’s board. Morgan Stanley upgraded Block to overweight after the cuts. Goldman Sachs raised its price target. It is fair to ask whether the intellectual framework followed the business decision or preceded it. That doesn’t make the argument wrong, but it does mean the incentive to believe the argument is quite strong for the people making it.

The flat structure graveyard. Zappos tried holacracy. Valve famously ran without managers. The Spotify model has been widely adopted and widely struggled with. These experiments didn’t fail because the idea was wrong in theory. They failed because removing formal structure doesn’t remove the need for coordination. It just moves coordination into informal channels, where it becomes invisible, political, and dependent on whoever has the most social capital. The information routing problem gets solved. The interpersonal and political problems get worse.

Data completeness. A world model built from Slack threads, Jira tickets, pull requests, and performance metrics reflects what was written down. A significant fraction of organizational knowledge is never written down. It lives in the judgment calls that didn’t make it into a doc, the context a senior engineer carries about why a system was built the way it was, the reason a decision was made three years ago that nobody remembers to explain to new people. The model sees the artifact. It doesn’t see the reasoning behind it.

Data quality and drift. This one is distinct from completeness and is arguably more dangerous. Information that was accurate when it entered the system becomes stale. The system continues to present it with the same authority as fresh data. Decisions get made on information that was true six months ago and isn’t anymore. You don’t see the error at the time. It shows up later, in ways that are very hard to trace back to their source.

This is a documented, recurring failure in knowledge management systems generally. It’s not theoretical. Platforms like Guru have built their core product differentiation specifically around the knowledge freshness problem because the industry learned, repeatedly, that drift is the default. Small errors accumulate in decisions that each look reasonable in isolation, until something downstream breaks in a way nobody can explain.
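To make the drift problem concrete, here is a minimal sketch of one common mitigation: attaching a last-verified date to each piece of knowledge and flagging it once it passes an age threshold, so stale entries stop being presented with the same authority as fresh ones. Everything here is hypothetical and for illustration only: the `KnowledgeItem` type, the `present` function, the sample entries, and the 180-day cutoff (chosen to echo the six-month example above) are assumptions, not a description of how Block, Guru, or any real system works.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical threshold: roughly six months. A real system would tune this per content type.
MAX_AGE = timedelta(days=180)


@dataclass
class KnowledgeItem:
    text: str
    last_verified: datetime  # when a person last confirmed this was still true


def is_stale(item: KnowledgeItem, now: datetime, max_age: timedelta = MAX_AGE) -> bool:
    """Return True if the item has gone longer than max_age without verification."""
    return now - item.last_verified > max_age


def present(item: KnowledgeItem, now: datetime, max_age: timedelta = MAX_AGE) -> str:
    """Render an item, marking stale entries instead of showing them as authoritative."""
    if is_stale(item, now, max_age):
        return f"[unverified since {item.last_verified:%Y-%m-%d}] {item.text}"
    return item.text


# Illustrative data only.
now = datetime(2026, 4, 1)
fresh = KnowledgeItem("Team A owns the billing service.", datetime(2026, 3, 1))
old = KnowledgeItem("Team B owns the ledger service.", datetime(2025, 6, 1))

print(present(fresh, now))
print(present(old, now))
```

Note what the sketch does not solve: it only surfaces age, not correctness. Someone still has to re-verify the flagged entry, which is itself a judgment call the system cannot make on its own.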

Regulatory reality. For companies operating in financial services or healthcare, the question of whether AI can replace decision-making isn’t just organizational. It’s legal. Explainability requirements, fair lending law, and fiduciary duty don’t care how good your model is. Decision accountability cannot be delegated to a system in regulated domains. This alone may prevent this type of world model from being used in certain industries.

Where This Is Actually Going

None of this means the Dorsey/Botha thesis is wrong. I just think it’s incomplete.

The informational cluster is being automated. That is a real and permanent shift. Managers who spent the majority of their time aggregating context, relaying status, and maintaining alignment across teams are already less necessary than they were. That part of the argument makes a lot of sense.

What’s more interesting is what happens to the other two clusters as this plays out. If information routing gets absorbed by AI systems, the interpersonal and decisional work doesn’t disappear. It becomes more visible, and its value should become more obvious. The managers who survive this transition are the ones who were always doing the work that was hardest to put on a job description.

The open question is whether that work changes in character as organizations become more AI-instrumented, or whether it simply becomes more prominent because everything else has been stripped away. Does managing people become fundamentally different when the coordination layer is automated? Or does it turn out that the relational, developmental, and judgment-intensive work was always the real job, and the information routing was just the overhead we confused for the substance?

I don’t think anyone knows yet. Block’s Q1 2026 results will be a first data point. If they hit $12.2 billion in gross profit with 40% fewer people, the thesis gets harder to argue with. If they don’t, their Roman Army comparisons will age badly.

Either way, the question is worth asking more carefully than “can AI replace the org chart.” The org chart was never the point. It was just the structure we built to solve three problems at once. AI can solve one of them. The other two? Let’s see.