The Ticket Is Dead, Long Live the Spec

The Core Shift: AI Collapses the Cost of Implementation, But Not the Cost of Deciding What to Build

As AI-assisted coding gains wider adoption, we need to reexamine the tools we use to manage the development lifecycle. Historically, the software world has lived in ticketing systems like Jira. This made sense when code was the bottleneck: Jira let us define, plan, and track the manual labor of writing code. When the act of coding required significant time and staffing, tracking velocity and story points provided necessary, if imperfect, visibility into progress.

However, with AI now drastically reducing the effort required to generate code, our legacy metrics—points, velocity, and story size—are starting to break down. We have an opportunity to rethink the development process entirely.

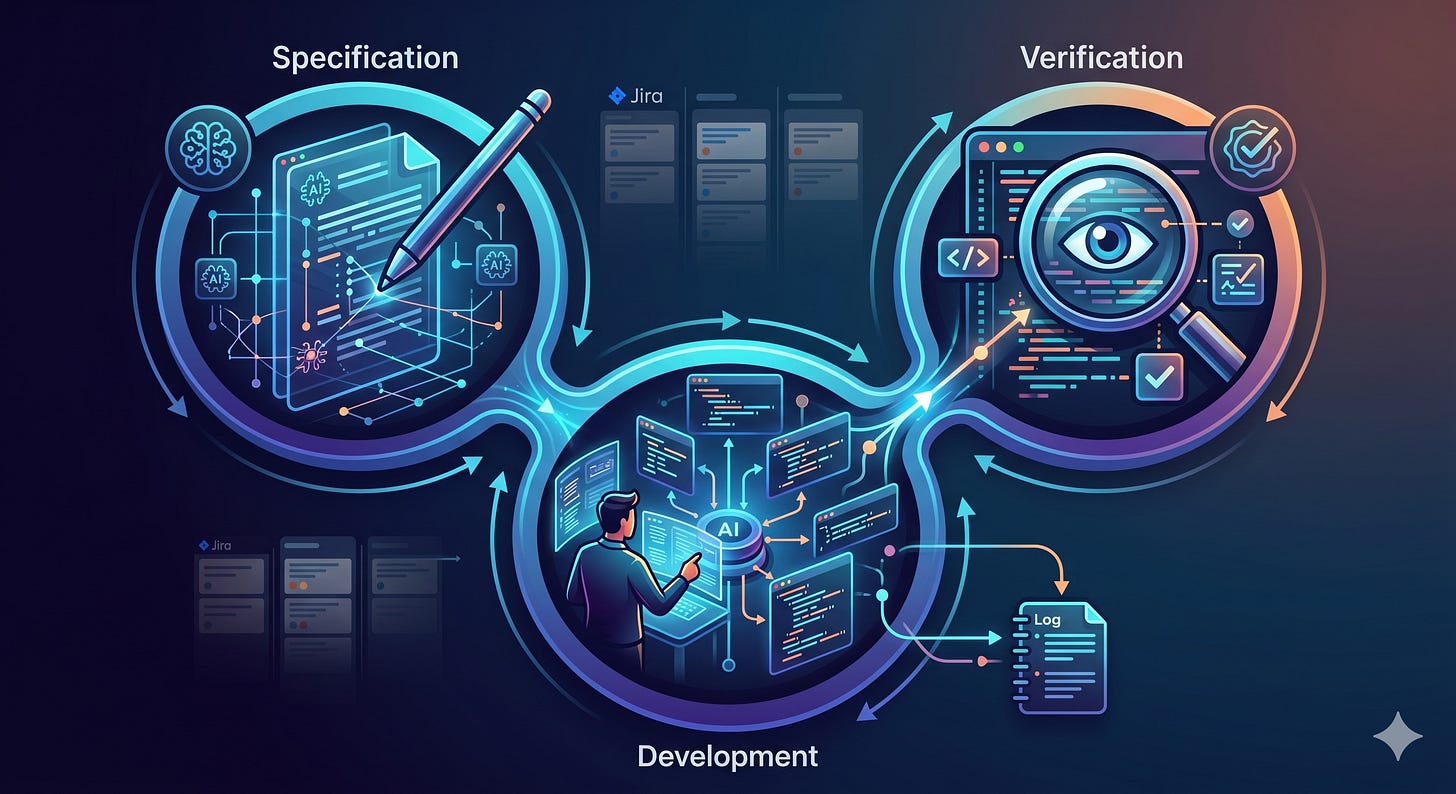

While bugs and features both require attention, the impact of AI is most profound in the feature development process. Automation is a double-edged sword: it helps you do the right thing quickly, but it also helps you do the wrong thing faster. To avoid the latter, we must shift our focus to three core phases: Specification, Development, and Verification.

Specification: Raising the Bar

In an AI-augmented workflow, the “size” of a ticket changes. We are no longer limited to small, rigidly defined bits of functionality. We can now deliver much larger chunks of work in a single pass. This shift, however, raises the bar for our upfront definitions.

In a Jira context, the ticket should serve primarily as a tracking mechanism that anchors the specification, whether that spec lives in the ticket itself or is linked from Notion or Confluence. The goal is a “source of truth” that defines the feature clearly enough for an AI to execute it and, more importantly, for a human to verify the result. The planning and point-assigning “middle” of the process is becoming less relevant; the real work now happens in definition and verification.
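One way to make "enough clarity" concrete is to give the spec a minimal structure and check it before any code is generated. The sketch below is illustrative only: the `FeatureSpec` and `AcceptanceCriterion` types, their fields, and the `TICKET-123` key are hypothetical and not part of Jira, Notion, or Confluence.

```python
from dataclasses import dataclass, field


@dataclass
class AcceptanceCriterion:
    """One verifiable statement about the feature (hypothetical shape)."""
    id: str
    statement: str
    how_to_verify: str = ""  # e.g. a test name or a manual check


@dataclass
class FeatureSpec:
    """A spec anchored to a tracking ticket (hypothetical shape)."""
    ticket_key: str
    summary: str
    criteria: list[AcceptanceCriterion] = field(default_factory=list)


def readiness_problems(spec: FeatureSpec) -> list[str]:
    """Return the reasons a spec is not yet ready to hand to an AI or a reviewer."""
    problems = []
    if not spec.summary.strip():
        problems.append("summary is empty")
    if not spec.criteria:
        problems.append("no acceptance criteria")
    for criterion in spec.criteria:
        if not criterion.how_to_verify.strip():
            problems.append(f"{criterion.id}: no verification method")
    return problems


spec = FeatureSpec(
    ticket_key="TICKET-123",  # placeholder key
    summary="Let users export their invoices as CSV",
    criteria=[
        AcceptanceCriterion(
            "AC-1",
            "Export includes every invoice in the date range",
            "test_export_covers_date_range",
        ),
        AcceptanceCriterion("AC-2", "Amounts use the account currency"),
    ],
)
print(readiness_problems(spec))  # ['AC-2: no verification method']
```

The point of the check is that a criterion nobody knows how to verify gets flagged before development starts, not after.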

Development: Capturing the Thought Process

We cannot treat AI development as a “black box” where a spec goes in and code comes out. While AI-assisted coding frees engineers from the drudgery of syntax and manual typing, the engineer’s role as a “guide” is more critical than ever.

We need to capture the thought process behind the implementation. Tools like Claude Code and Cursor already allow us to export session data that details how an engineer navigated a problem, where the AI stumbled, and how it was corrected. By automatically appending these session summaries to Jira tickets, we can maintain a complete audit trail of the engineering logic without adding manual overhead for the developer.
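As a rough sketch of what that automation could look like, the code below condenses a session log into a short summary and prepares a comment request for the ticket. Several things here are assumptions: the event format (`kind` and `text` keys) is made up, since export formats differ by tool; the base URL and issue key are placeholders; and authentication depends on your Jira deployment, so the header value is passed in by the caller. The request targets the Jira REST API v2 comment endpoint and is only built, not sent.

```python
import json
from urllib.request import Request

# Hypothetical event kinds and the labels used in the summary.
LABELS = {"prompt": "Asked", "ai_error": "AI stumbled", "correction": "Corrected"}


def summarize_session(events: list[dict]) -> str:
    """Condense a session log into a short, human-readable summary.

    Assumes each event is a dict with "kind" and "text" keys (a made-up
    format); events of any other kind are skipped.
    """
    lines = [
        f"- {LABELS[event['kind']]}: {event['text']}"
        for event in events
        if event["kind"] in LABELS
    ]
    return "AI session summary\n" + "\n".join(lines)


def build_comment_request(
    base_url: str, issue_key: str, summary: str, auth_header: str
) -> Request:
    """Build (but do not send) a POST that adds the summary as an issue comment."""
    url = f"{base_url}/rest/api/2/issue/{issue_key}/comment"
    body = json.dumps({"body": summary}).encode("utf-8")
    headers = {"Content-Type": "application/json", "Authorization": auth_header}
    return Request(url, data=body, headers=headers, method="POST")


events = [
    {"kind": "prompt", "text": "Add CSV export for invoices"},
    {"kind": "ai_error", "text": "Ignored the date-range filter"},
    {"kind": "correction", "text": "Pointed the AI at the existing filter helper"},
    {"kind": "tool_call", "text": "ran the test suite"},  # skipped: unknown kind
]
request = build_comment_request(
    "https://example.atlassian.net",  # placeholder site
    "TICKET-123",                     # placeholder issue key
    summarize_session(events),
    "Basic <credentials>",            # placeholder; depends on your deployment
)
print(request.full_url)
```

Passing the request to `urllib.request.urlopen` would send it; a hook that runs when a session ends is one place this could live.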

Verification: The New Bottleneck

AI has not eliminated the bottleneck; it has moved it from writing code to verification. The temptation with AI tools is to move fast and “test it in production” to see if it works. This is backward.

Because AI can hallucinate and requirements are often incompletely defined, rigorous verification matters more now than it did in the manual era. If we accept poorly specified or unverified code into our codebase today, we are simply compounding technical debt at an accelerated rate.
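One simple form of rigor is a traceability gate: a change is not accepted until every acceptance criterion in the spec has at least one passing test. The sketch below assumes test results have already been collected into a mapping from test name to a `(criterion_id, passed)` pair; that shape, and the criterion and test names, are hypothetical.

```python
def unverified_criteria(
    criteria_ids: list[str],
    test_results: dict[str, tuple[str, bool]],
) -> list[str]:
    """Return the criterion ids that lack at least one passing test."""
    passing = {criterion_id for criterion_id, passed in test_results.values() if passed}
    return [cid for cid in criteria_ids if cid not in passing]


criteria = ["AC-1", "AC-2", "AC-3"]
results = {
    "test_export_covers_date_range": ("AC-1", True),
    "test_amounts_use_account_currency": ("AC-2", False),
    # AC-3 has no test at all.
}
missing = unverified_criteria(criteria, results)
print(missing)  # ['AC-2', 'AC-3']
```

Both a failing test and a missing test block acceptance here, which is the distinction that "test it in production" erases.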

Closing the Loop

This shift isn’t something we need to wait for Jira or other vendors to solve. You can implement this workflow today by using existing tools as simple tracking mechanisms for robust specifications and automated session logs.

Over time, I expect legacy ticketing features focused on granular implementation steps to be deprecated as the industry moves toward this new model. By focusing on the feedback loop, using AI to improve specifications and verifying the results rigorously, we can make sure that “faster” also means “better.”